
3/23/2023 Gradient descent

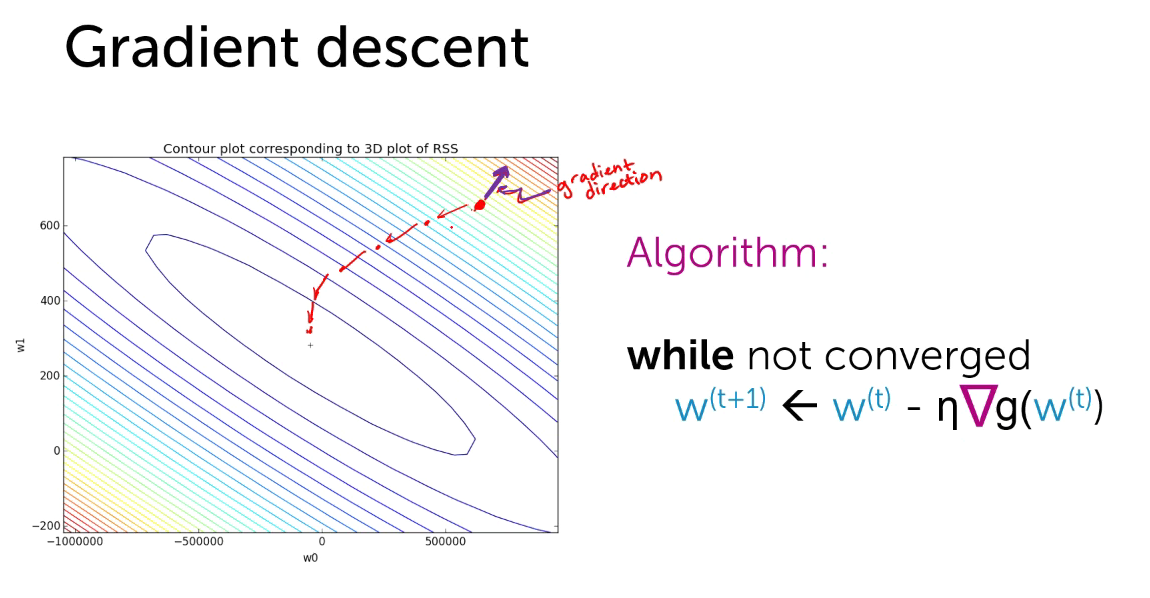

The argument that controls the size of the update is called the “ learning rate” and we’ll represent it as “alpha”.Ĭoefficient = coefficient – (alpha * delta) We’ll update the coefficients based on the previous coefficients minus the appropriate change in value as determined by the direction (delta) and an argument that controls the magnitude of change (the size of our step). This means we can update the coefficients in the neural network parameters and hopefully reduce the loss. We’ve now determined which direction is downhill towards the point of lowest loss. We’ll represent the appropriate direction as “delta”. Getting the derivative of the loss will tell us which direction is up or down the slope, by giving us the appropriate sign to adjust our coefficients by. We then calculate the derivative, or determine the slope. If we represent the loss function as “f”, then we can state that the equation for calculating the loss is as follows (we’re just running the coefficients through our chosen cost function): We determine the loss by running the coefficients through the loss function. In calculus, the derivative just refers to the slope of a function at a given point, so we’re basically just calculating the slope of the hill based on the loss function.

We’ll use the cost function to determine the derivative.

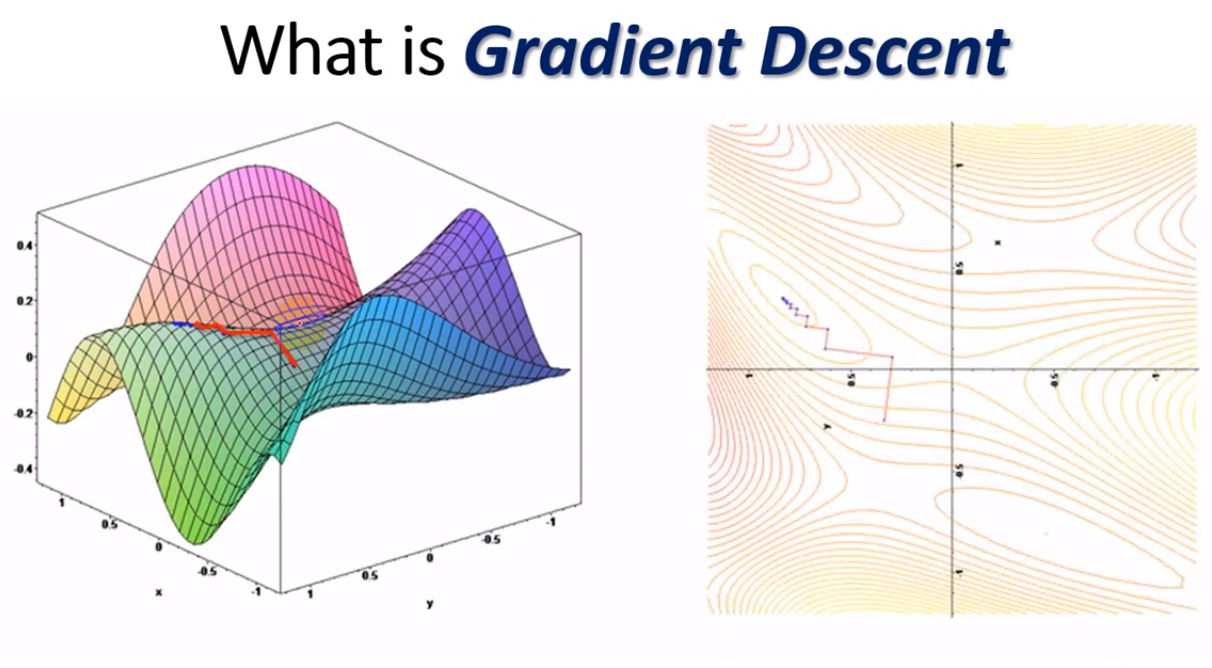

In order to calculate the gradient, we need to know the loss/cost function. In order to carry out gradient descent, the gradients must first be calculated. Photo: Роман Сузи via Wikimedia Commons, CCY BY SA 3.0 () Gradient descent starts at a place of high loss and by through multiple iterations, takes steps in the direction of lowest loss, aiming to find the optimal weight configuration. Now we know that gradients are instructions that tell us which direction to move in (which coefficients should be updated) and how large the steps we should take are (how much the coefficients should be updated), we can explore how the gradient is calculated. In the context of descending a hill towards a point of lowest loss, the gradient is a vector/instructions detailing the path we should take and how large our steps should be. Similarly, it’s possible that when adjusting the weights of the network, the adjustments can actually take it further away from the point of lowest loss, and therefore the adjustments must get smaller over time. However, as we get closer to the lowest point in the valley, our steps will need to become smaller, or else we could overshoot the true lowest point. When we start at the top of the hill we can take large steps down the hill and be confident that we are heading towards the lowest point in the valley. We want to get to the bottom of the hill and find the part of the valley that represents the lowest loss. Let’s shift the metaphor just slightly and imagine a series of hills and valleys. We can move down the slope towards less error by calculating a gradient, a direction of movement (change in the parameters of the network) for our model. The steepness of the slope/gradient represents how fast the model is learning.Ī steeper slope means large reductions in error are being made and the model is learning fast, whereas if the slope is zero the model is on a plateau and isn’t learning. 
The relationship between these two things can be graphed as a slope, with incorrect weights producing more error. A gradient is just a way of quantifying the relationship between error and the weights of the neural network. We want to move from the top of the graph down to the bottom. The bottom of the graph represents the points of lowest error while the top of the graph is where the error is the highest. What Are Gradients?Īssume that there is a graph that represents the amount of error a neural network makes. You don’t need to know a lot of calculus to understand how gradient descent works, but you do need to have an understanding of gradients. Gradient descent takes the initial values of the parameters and uses operations based in calculus to adjust their values towards the values that will make the network as accurate as it can be. It’s used to improve the performance of a neural network by making tweaks to the parameters of the network such that the difference between the network’s predictions and the actual/expected values of the network (referred to as the loss) is a small as possible. Gradient descent is an optimization algorithm. However, gradient descent can be a little hard to understand for those new to machine learning, and this article will endeavor to give you a decent intuition for how gradient descent operates. Gradient descent is the primary method of optimizing a neural network’s performance, reducing the network’s loss/error rate. If you’ve read about how neural networks are trained, you’ve almost certainly come across the term “gradient descent” before.
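As a rough illustration of the update rule coefficient = coefficient - (alpha * delta), here is a minimal sketch in plain Python. It is not taken from any particular library. The one-parameter model y = coefficient * x, the mean squared error loss, the toy data, and the function names `loss`, `derivative`, and `gradient_descent` are all assumptions made for this example; a real neural network has many coefficients and computes its gradients automatically.

```python
def loss(coefficient, xs, ys):
    """Mean squared error of the assumed model y = coefficient * x."""
    return sum((coefficient * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def derivative(coefficient, xs, ys):
    """Slope of the loss with respect to the coefficient (the article's delta)."""
    return sum(2 * (coefficient * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def gradient_descent(xs, ys, coefficient=0.0, alpha=0.05, steps=200):
    """Repeatedly apply coefficient = coefficient - (alpha * delta)."""
    history = []  # loss measured before each update
    for _ in range(steps):
        history.append(loss(coefficient, xs, ys))
        delta = derivative(coefficient, xs, ys)
        coefficient = coefficient - (alpha * delta)
    return coefficient, history


# Hypothetical toy data generated by y = 2 * x, so the best coefficient is 2.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]

fitted, history = gradient_descent(xs, ys)
print(f"fitted coefficient: {fitted:.4f}")
print(f"loss went from {history[0]:.4f} to {history[-1]:.6f}")
```

The sign of `delta` decides whether the coefficient moves up or down, and `alpha` scales the step. On this toy data, calling `gradient_descent(xs, ys, alpha=0.2)` makes every step overshoot the minimum, so the loss grows instead of shrinking, which is the overshooting problem described in the hill metaphor.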
